A recent Forbes column raises provocative, data-driven questions about students’ changing attitudes toward the value of college. The column analyzes enrollment data described in more detail in a New York Times piece. The relevant facts are as follows:

- College enrollments are still alarmingly down overall. (This is not news, but the most recent numbers confirm the trend continues at a sobering pace.)

- First-year enrollments of first-time students are rebounding slightly.

- Applications to flagship state colleges are way up.

Both the Forbes column and the New York Times piece are full of speculation about what these data points mean. Are students permanently changing their judgments about the overall value of college? Are we still seeing the effects of the pandemic (and now inflation and an overheated job market, and maybe a recession soon)?

It’s too early to tell, and it will be hard to separate the effects of what are likely multiple causes. That said, Forbes columnist Derek Newton’s guess about one such cause is consistent with Argos’s thesis and our observations as we conduct ongoing market and product research. Newton argues that the rise in applications and first-year enrollments, coupled with the overall drop in total enrollments, suggests that students still see value in college but are dropping out because they come to question the value of the particular college experience they are having relative to the money they are spending (and the money they could be making in a market where “help wanted” signs are everywhere).

We are almost certainly in the early stages of a permanent shift toward blended learning, even at classic residential colleges. Where alumni’s memories of life-changing 20th-Century education are typically about experiences they had in physical classrooms, 21st-Century students will need more of those revelatory experiences to happen online.

But we’re definitely not there yet. Most colleges don’t know what their shift should even look like. And some haven’t even admitted to themselves that the change is permanent. So it wouldn’t be surprising to discover that students are unhappy with the current transitional state of the college educational experience. They like blended classes but don’t want to be stuck forever in slightly tweaked versions of Zoom-based remote learning. So what would engaging 21st-Century education look like? While we at Argos don’t believe there is only one answer to that question (any more than we believe there is only one good way to teach a class), we do have some early data from our first customers that demonstrate how we might recognize success when we see it.

Designing for Meaningful Engagement

ASU’s Center for Education Through Exploration (ETX) creates content that is both sold for use in college classes through ASU’s Inspark Education group and made available to K12 students through the NASA-supported Infiniscope program. ETX’s content designs are based on what they call “guided experiential learning,” which is pretty much what it sounds like. Students explore, experiment, and learn by doing—with some guidance and scaffolding.

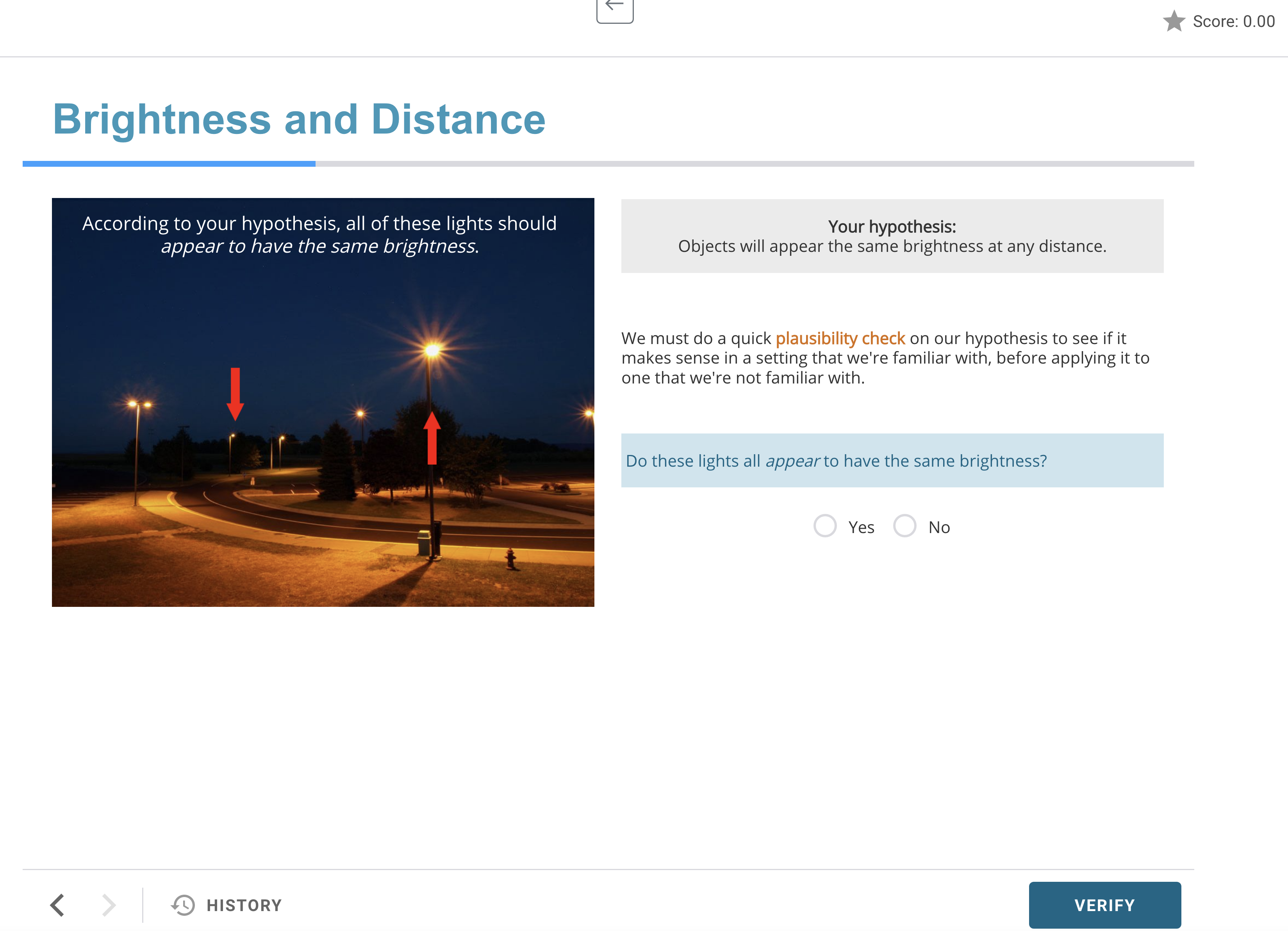

For example, in an astronomy course, students are asked to consider the relationship between a star’s distance and its brightness:

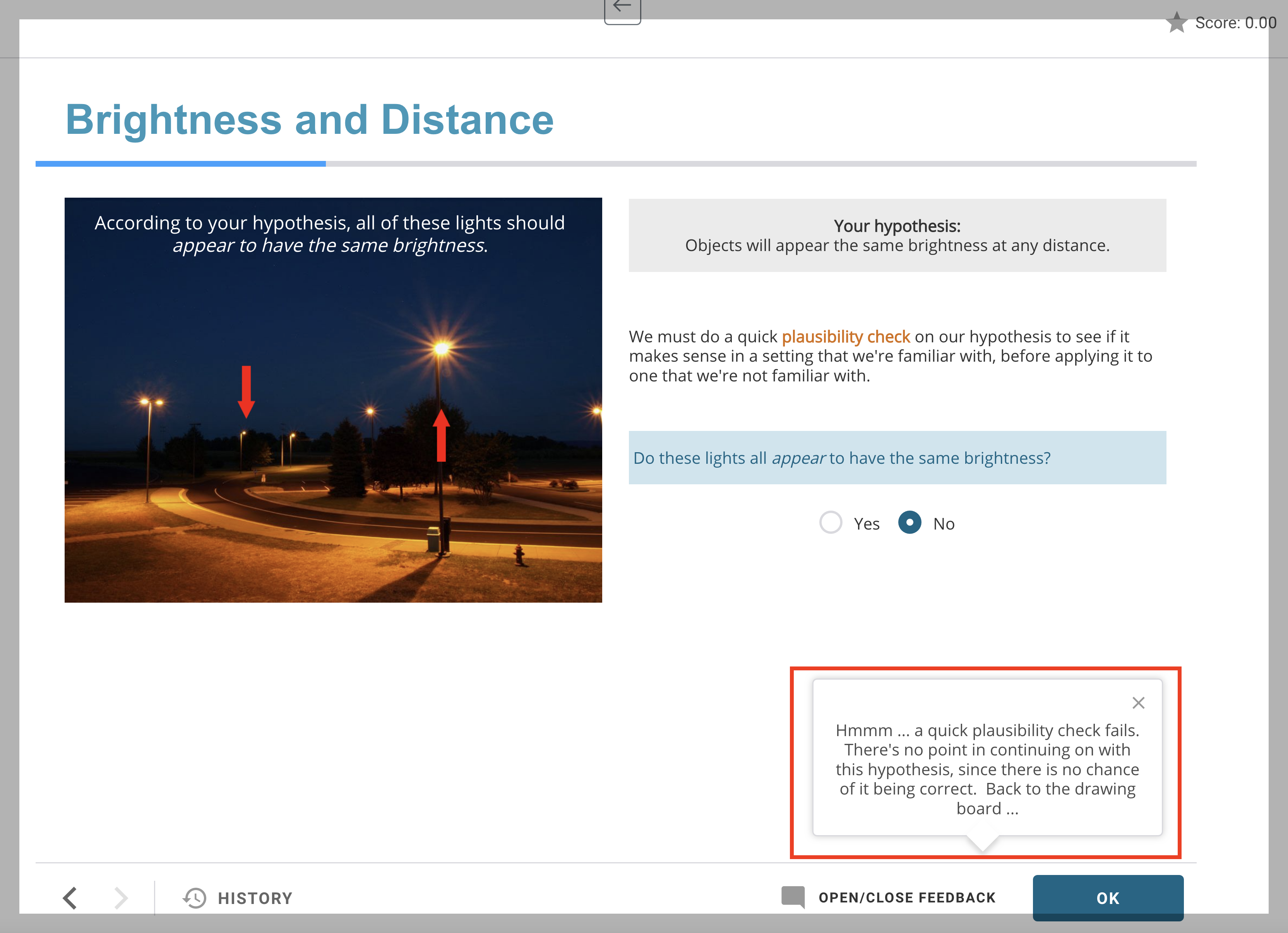

The typical way a wrong answer would be handled with such a question is to tell the students the correct answer or tell them they are wrong and ask them to try again. The ETX folks do something different in their design. They engage the student in a kind of dialogue:

Once the students have thought more deeply about their answers, then the screen adds feedback to help them think like scientists:

Only after the students have tested their answers are they asked to try again.

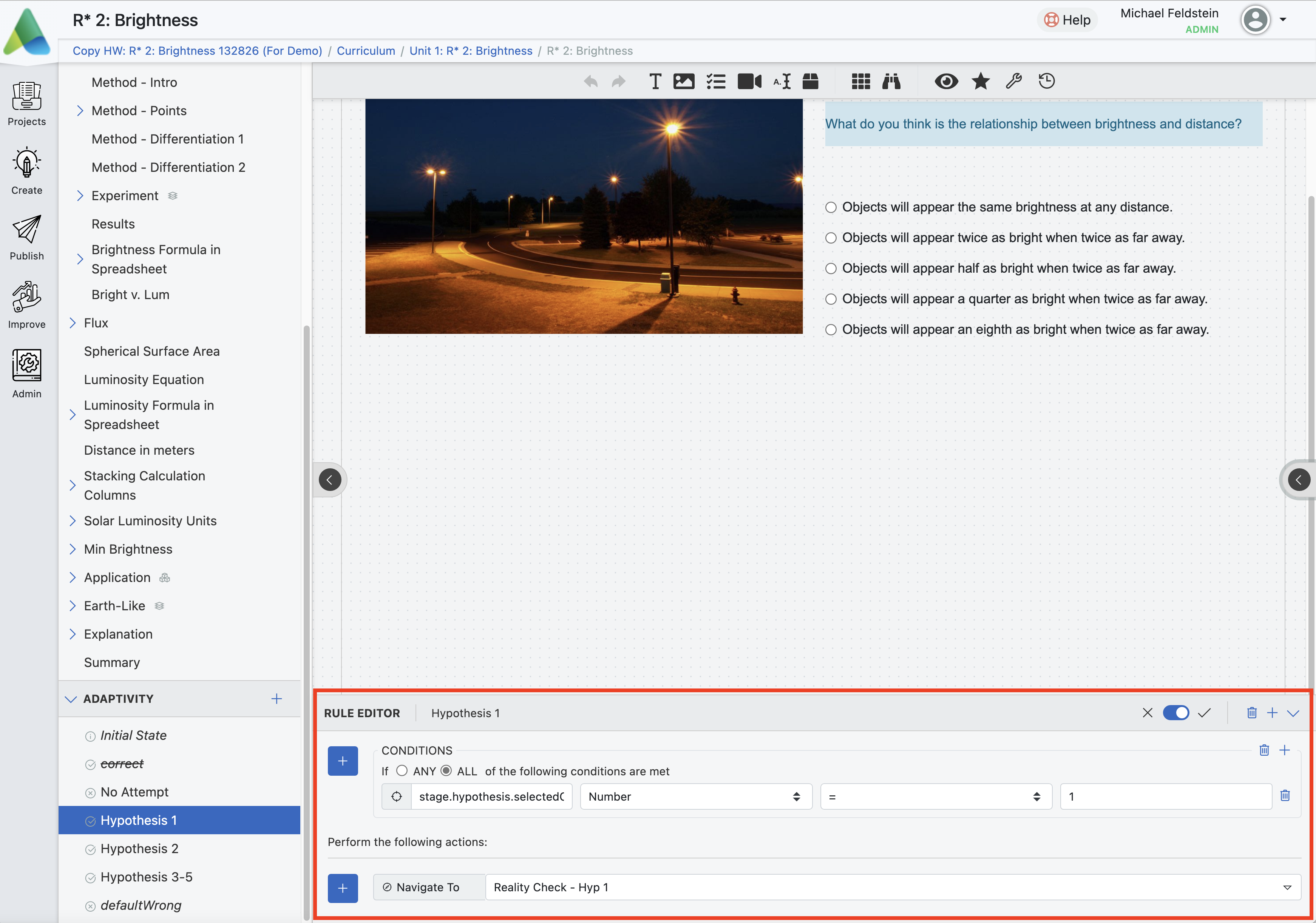

Under the hood, our platform supports this pedagogical approach (among others) natively. What does that look like, and what are its implications for sussing out whether the students are truly, meaningfully engaged? Here’s the second screen as seen in our authoring tool for creating these sorts of lessons:

The authoring rule says “If students select answer number 1, then send them to the reality check page for that answer.” (The language is slightly more complicated than that, but only slightly.) Notice that this condition is both human- and machine-readable. That will be important for answering the question about student engagement. For now, the important point to take away is that the student’s click is pedagogically meaningful. It suggests the student has a particular misconception we want to address (and will address). It’s not like the student clicking on a folder in an LMS, which tells us nothing about the student’s learning progress and very little about their engagement with the learning challenge they are being given.
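To make the idea of a condition that is both human- and machine-readable concrete, here is a minimal sketch in Python. It is an illustration only, not the platform's actual rule format: the `BranchRule` class, the `next_page` function, and the page names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BranchRule:
    """One authoring rule: if the student selects `answer`, show `target_page`."""
    label: str        # human-readable name of the condition
    answer: int       # answer number the student selected
    target_page: str  # page the student is sent to next


# Hypothetical rules for a multiple-choice question; page names are invented.
RULES = [
    BranchRule("Selected answer 1", 1, "reality-check-1"),
    BranchRule("Selected answer 2", 2, "reality-check-2"),
]


def next_page(selected_answer, rules=RULES, default="try-again"):
    """Return (condition label, next page) for the student's selection."""
    for rule in rules:
        if rule.answer == selected_answer:
            return rule.label, rule.target_page
    return "No matching rule", default


label, page = next_page(1)
print(label, "->", page)
```

Because each rule carries a label alongside its logic, the same record that routes the student can later be read by a person or counted by a program.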

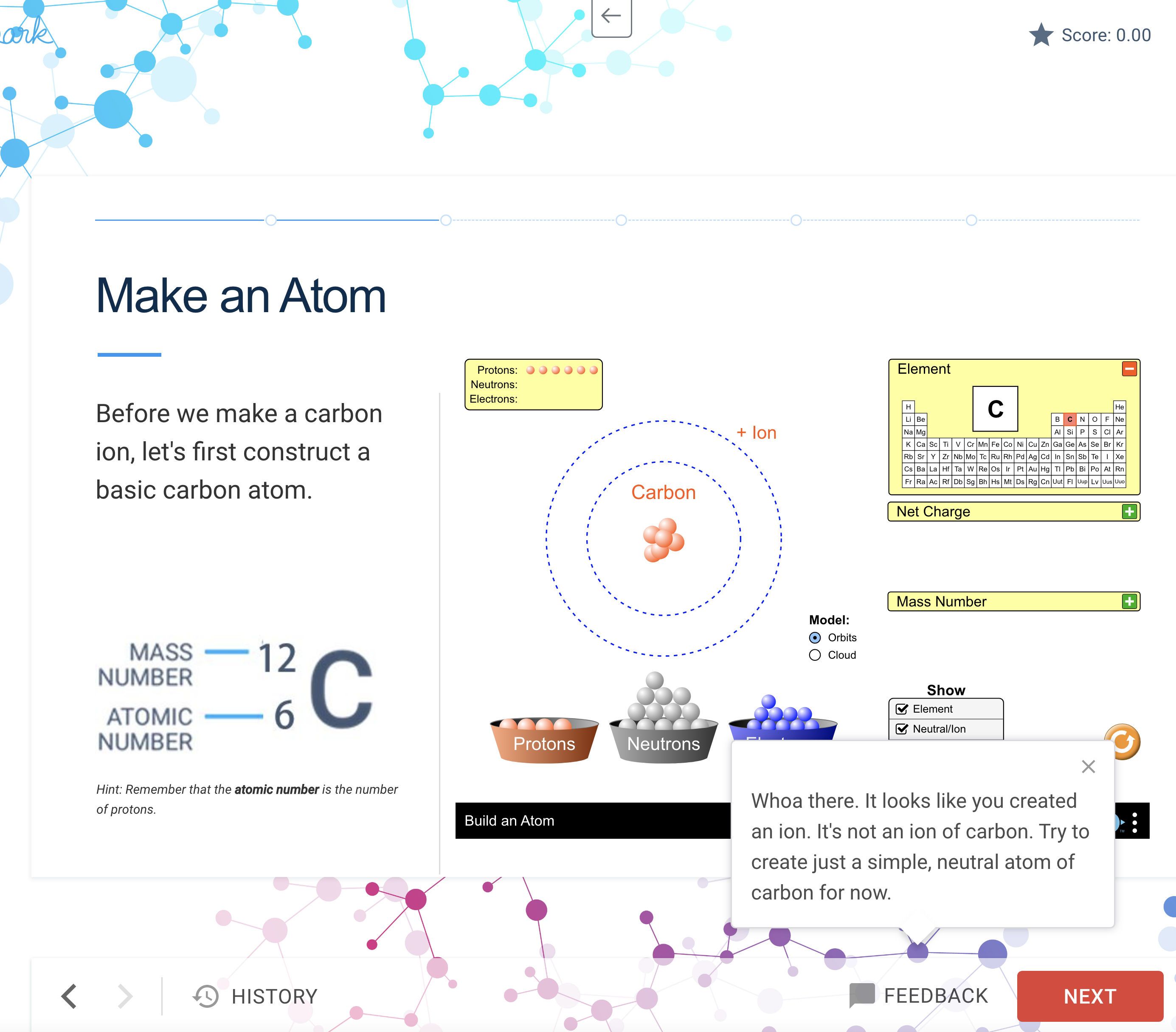

Because experiential learning depends on students learning by exploring, our ability to support that kind of educational experience must include the ability to watch (and help) them do more than just answer multiple-choice questions. Here’s an example of a lesson in which students are asked to build a carbon atom using a PhET-IO simulation. Students can drag protons, electrons, and neutrons from the buckets to make an atom. That’s all part of the simulation itself. But the dialog box on the bottom right that tells students they haven’t done the job yet is native to our platform:

Students can play with the construction of the atom and keep getting guidance until they successfully build the carbon atom.

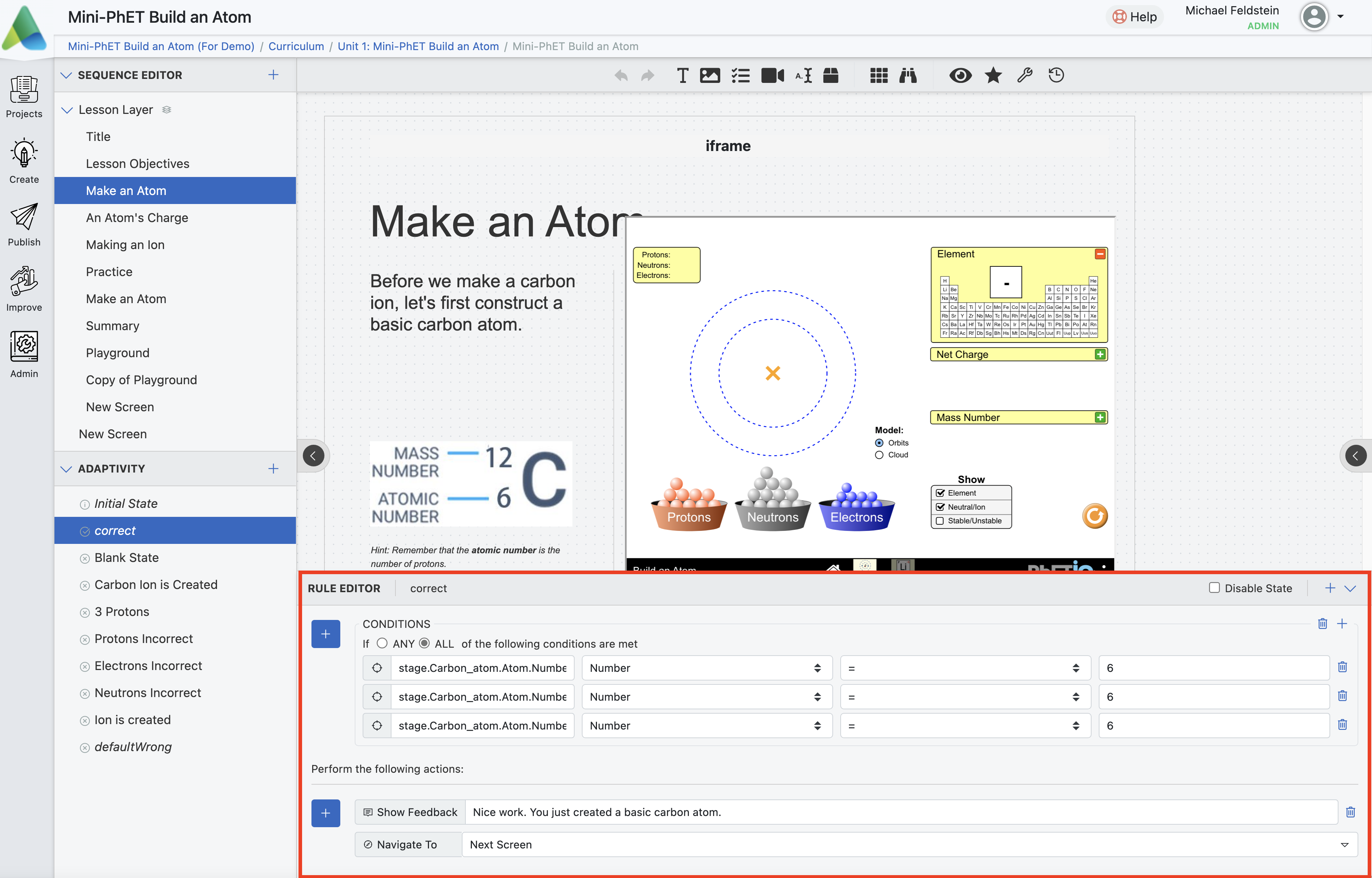

Under the hood, the design is like the branching exercise above. It’s just a little richer:

Since the correct construction of a carbon atom requires the correct number of protons, electrons, and neutrons, we have to look at three variables to evaluate the student’s effort. The lesson is designed to look at all three of those variables based on what the student has submitted and then provide feedback (which you can see at the bottom of the part of the screen that I’ve highlighted with the red box). On the left menu, under “Adaptivity,” you can see that the lesson’s author has set up different conditions for different answers the students might give. Each of these conditions is meaningful regarding the student’s understanding of what a carbon atom looks like, e.g., “Protons Incorrect” means that the student doesn’t know how many protons a carbon atom should have. Again, the answer is both human- and machine-readable, which is essential for reasons I’ll get to momentarily. And again, the important part of the design is that students can explore, try something, find out what happens, and try again.
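The three-variable check described above can be sketched in a few lines of Python. This is an assumption-laden illustration rather than the lesson's real logic: it assumes the target is a neutral carbon-12 atom (6 protons, 6 neutrons, 6 electrons), and the `evaluate_atom` function and the "Neutrons Incorrect," "Electrons Incorrect," and "Correct" labels are hypothetical, modeled on the “Protons Incorrect” condition named in the lesson.

```python
# Assumed target: a neutral carbon-12 atom.
CARBON = {"protons": 6, "neutrons": 6, "electrons": 6}


def evaluate_atom(submitted, target=CARBON):
    """Return the labeled conditions triggered by a student's submission.

    `submitted` maps particle names to the counts the student placed.
    Each mismatch yields its own label, so one attempt can trigger several.
    """
    conditions = [
        f"{particle.capitalize()} Incorrect"
        for particle in ("protons", "neutrons", "electrons")
        if submitted.get(particle, 0) != target[particle]
    ]
    return conditions or ["Correct"]


attempt = {"protons": 5, "neutrons": 6, "electrons": 6}
print(evaluate_atom(attempt))
```

Each returned label names a specific gap in the student's model of the atom, which is what lets the feedback be targeted and the resulting data be interpretable.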

What Meaningful Engagement Looks Like in the Data

Since we launched the platform in January, we’ve had just under 8,000 students using ETX-designed guided experiential learning lessons and courses. Those students have completed over two million assessments.

Two million. An average of 256 assessments per student.

Keep in mind that these are not traditional multiple-choice quizzes. As you saw above, students are being assessed while they learn. The term of art is “continuous formative assessment,” which means that students are constantly being assessed and given feedback as they learn. Not periodically. Not often. Continuously.

Think about a seminar class. How frequently does the educator assess each student by this definition during class discussions? There are comments, silences, facial expressions, answers (or non-answers) to direct questions, and so on. How frequently does an educator assess a student during a lab or a field trip? In an ideal world, the answer should be “continuously.”

We at Argos are not fans of talking about “big data” as if it were a magic wand. We don’t believe that millions of clicks automatically tell us…well…anything useful at all. For example, if the platform’s “time on task” measure is how long the page is open, ask yourself how many browser tabs you have had open for weeks or even months. If the developers made that measure a little more sophisticated by tracking not just whether the page is open but how often students are clicking, ask yourself whether such information tells you whether a student is working, lost in the software, or just messing around. How can we know that students are genuinely engaged? That they’re trying to learn? That they are making progress? Or that they are stuck on a particular misunderstanding?

In the examples from the ETX lessons, each click we’re counting has a specific meaning relative to the learning task and is embedded in a larger, coherent learning experience with a beginning, middle, and end. Each of those two million assessments is an educator-designed moment of engagement. And because these micro-assessments are embedded in learning adventures, we know that students are not just skipping straight to the quiz and taking it to see how they do. The adventure itself is the quiz. I won’t say it’s impossible for students to click through these lessons without actually trying to learn, but it’s a lot harder than it would be with other designs.

And because each of those two million little interactions has meaning encoded into it, we can begin to provide educators with a rich and intuitive sense of how students are doing and how well the teaching is going in a way that is akin to the sense they get in that seminar, lab, or field trip. We can apply machine learning algorithms that identify points in the material where students struggle or, conversely, where they breeze through without much effort. Because those machine-provided insights will be based on points of meaningful interaction in a larger educational context, human educators can check the machine’s “judgment” about what isn’t working and test improvements. Why do students seem to give the wrong answer on a problem multiple times or get stuck in a loop? How do those moments relate to students giving up on an assignment? We can combine the best of human and machine judgment to see where students’ levels of meaningful engagement drop off either because the work is too hard or because it’s too easy. We can troubleshoot. We can assess the progress of particular students and the effectiveness of the course design, step by step, point by point. The publishers (and in this case, ETX, Inspark, and Infiniscope are publishers whose products are used by scores of schools) can do more than issue a “new edition.” They can measurably improve the learning impact of these lessons, not every few years but as soon as they see an opportunity for improvement. And educators can do the same.
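As a rough illustration of why labeled interactions are easier to analyze than raw clicks, the sketch below flags lesson steps where students repeatedly trigger a non-correct condition. It is deliberately a simple counting heuristic, not machine learning and not Argos's analytics. The event format, the `struggle_points` function, the step names, and the threshold of three attempts are all assumptions made for the example.

```python
from collections import defaultdict


def struggle_points(events, min_attempts=3):
    """Count, per lesson step, the students who missed it repeatedly.

    `events` is an iterable of (student_id, step_id, condition_label)
    tuples. A student counts as struggling on a step once they have
    triggered a non-"Correct" condition there at least `min_attempts` times.
    """
    wrong_counts = defaultdict(int)
    for student_id, step_id, condition in events:
        if condition != "Correct":
            wrong_counts[(student_id, step_id)] += 1

    students_per_step = defaultdict(int)
    for (_student_id, step_id), count in wrong_counts.items():
        if count >= min_attempts:
            students_per_step[step_id] += 1
    return dict(students_per_step)


# Invented sample data: one student misses the atom step three times.
events = [
    ("s1", "build-atom", "Protons Incorrect"),
    ("s1", "build-atom", "Protons Incorrect"),
    ("s1", "build-atom", "Protons Incorrect"),
    ("s1", "build-atom", "Correct"),
    ("s2", "build-atom", "Correct"),
    ("s2", "star-brightness", "Selected answer 1"),
]
print(struggle_points(events))
```

An educator looking at the flagged step can then read the condition labels behind it and judge whether the material is too hard, the feedback is unclear, or the flag is a false alarm.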

As I mentioned earlier in the post, we at Argos do not believe that any particular approach to teaching is the single best approach. Guided experiential learning is one effective teaching strategy. Our platform is “pedagogically plastic,” meaning that it actively supports different strategies for creating meaningful, engaging, and effective educational experiences. That said, the principles behind how we support course design and data collection hold regardless of the teaching approach used in any Argos-hosted lesson. Educators should have visibility into students’ learning experience in a blended or online class that is as high-quality and high-fidelity as they get in a face-to-face class. And students should feel the same sense of connection and purpose that they can feel in gold-standard face-to-face experiences like seminars, fieldwork, or labs. One key to achieving these goals is gathering pedagogically meaningful data that give educators, students, and content designers a sharper sense of how well the digital learning experiences foster the kind of meaningful, impactful learning that students will hopefully remember for the rest of their lives.

The data are footprints in the sand. Students’ willingness to take an average of 256 assessments each within one class demonstrates that Argos-hosted courses are creating those experiences. We are seeing tremendous engagement through their participation in activities that we know are meaningful, not just the noise of clicks that could mean anything. And because the meaning of this activity is knowable, we can learn how to make those experiences even richer.